Key Takeaways

- LLM hallucinations exist because current models generate plausible text from patterns rather than verified knowledge.

- Interfaces make hallucinations worse by presenting guesses with the same confidence as verified information.

- Thoughtful UX can reduce the impact of hallucinations by flagging uncertainty, providing transparency, and adapting responses based on risk.

- Developers can make LLMs more trustworthy by designing interfaces that clearly communicate uncertainty, indicate when the model is extrapolating, and guide users toward verifying answers.

- Developers should implement risk-aware design and human-in-the-loop checks in high-stakes contexts.

- Users can protect themselves by asking for sources, double-checking critical information, and treating LLMs as assistants rather than authorities.

We live in a world where LLMs can generate impressive business plans, marketing copy, product descriptions, and even technical documentation and code, all in the blink of an eye.

Yet they still hallucinate, some more than others. These hallucinations often show up as fabricated facts, case studies that never existed, and misinformation that spreads faster than any attempt to set the record straight.

As if that wasn’t bad enough, we keep hearing the same response: we need better models, more data, longer context windows, better prompts, more fine-tuning, stronger guardrails, more compute. All of these help, and they have reduced hallucinations in newer models, but LLMs overall haven’t become reliable in the true sense of the word.

Therefore, I think it’s fair to say that, despite all of the improvements made over the past few years, hallucinations aren’t going away. In fact, they most likely never will completely.

Why LLMs Hallucinate

We already addressed this topic in a previous blog post, but one thing worth reiterating is that LLMs function more like sophisticated auto-complete engines than true reasoning systems. While they have an impressive vocabulary and can generate text that seems plausible, they don’t actually “know” anything and lack any form of self-awareness. As a result, sometimes they’re right and other times they’re wrong, and when they’re wrong, they usually sound convincingly right.

So, if you’re waiting for an LLM that doesn’t hallucinate, you’re basically waiting for a different kind of technology that doesn’t exist yet. Instead of treating that possibility as a potential future fix, it makes more sense to focus on the core problem behind LLMs’ hallucinations, because understanding what’s at the root could actually help us address hallucinations the right way and move past endless workarounds towards real solutions.

Looking Beyond Quick Fixes

“Fixing” LLM hallucinations is one of the hottest topics right now, and for good reason. While some fixes do help, most of them only offer surface-level solutions that don’t acknowledge the core cause of hallucinations. That core cause is simple: LLMs are built solely to recognise patterns in data. This is something no amount of fine-tuning can change. Therefore, eliminating hallucinations isn’t a matter of better prompts or minor tweaks but of deeper infrastructure improvements.

That being said, a question we should ask ourselves is whether the real problem is that LLMs hallucinate or that we keep designing and presenting LLMs as if hallucinations weren’t a major issue.

Admitting the latter changes the perspective: hallucinations point not only to a technical limitation but also to a UX design failure rooted in how LLM outputs are presented and interpreted by users.

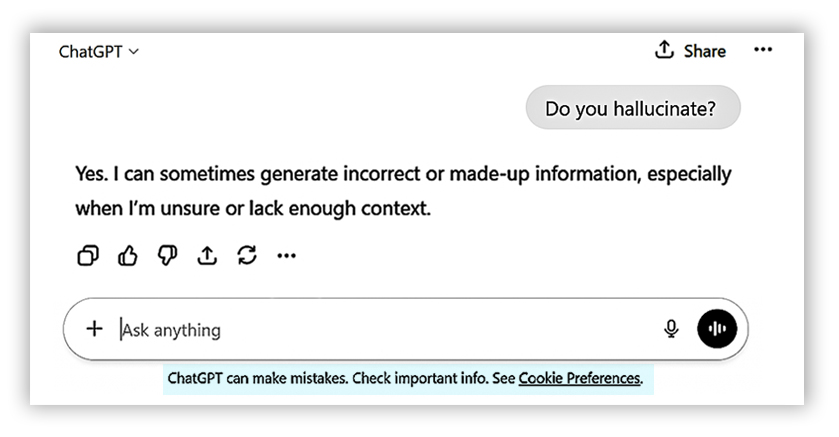

In other words, the real issue here isn’t that models sometimes fabricate but that users believe, or are led to believe, that everything an LLM says is true and accurate. Even though LLMs now include disclaimers that they can make mistakes, as shown in the image above, most users still trust their outputs blindly.

This is partly because of how the information is transmitted: LLMs respond instantly with polished prose, rarely express doubt proportional to their actual uncertainty, and don’t provide any visual cues to help people distinguish between things they “know” well versus things they’re essentially guessing about. This creates an illusion of knowledge and competence that doesn’t match the reality.

Therefore, the solution right now isn’t necessarily to try to stop LLMs from hallucinating, which is an impossible target with the systems we currently use, but to help users better distinguish confidence from certainty, guesses from grounded answers, and what’s “possible” from what’s “verified”.

LLM Hallucinations: A UX Problem No One Wants to Own?

Here’s the quiet part almost no one says out loud: every LLM answer looks equally confident. Whether it’s a well-sourced historical fact, a creative idea, or a total fabrication invented just moments ago, you see the same font, the same tone, and the same confident delivery. At the same time, the interface doesn’t show how ambiguous the query is, how the answer is generated, or how confident the system actually is.

On top of that, humans aren’t just terrible at questioning robot overlords that communicate confidently in full sentences. We also bring our own cognitive biases into nearly every interaction with the technology.

Because one of those cognitive biases is our tendency to trust information that appears authoritative, we often anchor on the first answer an LLM gives simply because it’s expressed in a fluent, confident tone. Many people also assume that LLMs are more objective than we are, and therefore more likely to be correct.

That’s how we end up citing their suggestions in our work without double-checking and sometimes deferring to them in disagreements, trusting the “machine’s judgment” over our own. We even let them influence our decisions, taking their advice on which movie to watch, what recipe to try, which exercise routine to follow, and even which business idea or investment to pursue. All of this happens even though we know their output could be completely fabricated or subtly misleading. And that’s only because LLMs’ fluency and confidence create a false sense of authority and trust.

While all of this may come as a surprise, none of it is new. After all, it’s basic human psychology. And we have to admit this wouldn’t necessarily be a problem if LLMs were always right and their interfaces didn’t actively amplify our biases. But they do. Then, when something goes wrong, we blame the model. Yet the model didn’t design the interface. We did.

What’s more surprising is that we keep building these interfaces where a guess and a verified answer are visually indistinguishable, and then wonder why users struggle to tell them apart.

That being said, hallucinations aren’t dangerous simply because they exist. They’re dangerous because the interface presents them with the same authority as facts.

If the interface said, “I’m not sure, but here’s a possible answer”, most hallucinations would be mildly annoying rather than harmful. Instead, the answers we’re getting right now are delivered with the confidence of someone who checked their sources, even though they didn’t. This is where UX matters more than model improvements. Because in this particular situation, UX is the only tool developers can use to signal uncertainty, set the right expectations, and force users to slow down when the stakes are high.

Practical Steps Developers Could Take

There’s plenty developers can do to reduce the impact of LLM hallucinations. It starts with being honest about what these systems can and can’t do, and then designing the interface to match that reality. Here are a few ways to make it happen.

- By Calibrating Confidence Signals – Some systems, like ChatGPT or Claude, already hint at uncertainty but not consistently. Since users need to know when an LLM is guessing, interfaces should flag uncertain answers, use words like “likely”, “unclear”, or “no reliable sources found”, and make it obvious when the model is extrapolating rather than retrieving from data. While this makes the product feel less “magical”, it also makes the potential effects of hallucinations far less dangerous. Colour coding, tags like “verified”, “likely”, or “uncertain”, or the option to set alerts when the model is speculating can help put hallucinations into context without confusing users.

- By Using Explicit Interaction Modes – Anyone who’s used an LLM knows that not every task needs the same kind of response. Creative writing, research, and brainstorming all require different approaches. Currently, users can steer an LLM through custom configurations, built-in modes, or different models, but these options vary by platform and aren’t always obvious. Making the active mode explicit and easy to switch would tell users what kind of answer to expect.

- By Designing for Transparency – Simply adding a source link at the bottom of an answer, as most LLMs do today, is not enough. Most people won’t click it, and some will assume it proves the whole response is correct. Instead, interfaces should do more, such as explaining what the source actually supports, mentioning when no relevant sources were found, and admitting gaps instead of filling them with confident nonsense. In those cases, the most trustworthy answer may simply be “I don’t know”, because it helps users make better decisions.

- By Integrating Human-in-the-Loop Checks – In high-stakes areas like healthcare, finance, or law, human verification should be part of the workflow, not an afterthought. If an LLM quietly acts as an authority, that’s not innovation; it’s negligence dressed up in tech branding. In these cases, the interface should insert checkpoints for human verification, flag answers when the model isn’t confident, and provide tools to escalate uncertain or high-risk responses to experts. This will frame the LLM as an assistant, not an authority, which it isn’t anyway.

- By Implementing Risk-Based Design – Not all hallucinations carry the same risk. For instance, a made-up metaphor might be exactly what makes a poem stand out. A hallucinated medical guideline, on the other hand, could have disastrous consequences. This means hallucinations should be treated as a risk management problem, not a simple “good vs bad” one.

Given this, an LLM’s interface should scale with risk. For example, in low-risk contexts, such as creative exploration, the LLM can prioritise flexibility, creativity, and fluency. In medium-risk contexts, like research, users need transparency, verified outputs, and uncertainty flagged when necessary. In high-risk situations involving safety-critical decisions, strict verification, constraints, and human oversight are essential. While matching the interface to the level of risk and user expectations would make LLMs more useful and trustworthy, using the same interface everywhere is what keeps users from reliably identifying hallucinations.
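To make the steps above a little more concrete, here is a minimal sketch of how an interface layer might combine confidence tags, source transparency, and risk tiers. Everything in it is an assumption made for illustration: the `Answer` fields, the `confidence` score (how such a score would be obtained is a separate and hard problem), the 0.8 and 0.5 thresholds, and the `present` function are hypothetical and do not reflect any existing product or API.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = "low"        # e.g. creative exploration
    MEDIUM = "medium"  # e.g. research
    HIGH = "high"      # e.g. safety-critical decisions


@dataclass
class Answer:
    text: str
    confidence: float   # hypothetical score in [0, 1]; its origin is out of scope
    sources: list[str]  # links that actually support the answer


def confidence_label(answer: Answer) -> str:
    """Map score and sources to the tags mentioned above (thresholds are illustrative)."""
    if answer.sources and answer.confidence >= 0.8:
        return "verified"
    if answer.confidence >= 0.5:
        return "likely"
    return "uncertain"


def present(answer: Answer, risk: Risk) -> dict:
    """Decide what the interface shows for a given risk tier."""
    view = {
        "text": answer.text,
        "label": None,
        "sources": [],
        "note": None,
        "needs_human_review": False,
    }
    if risk is Risk.LOW:
        # Low risk: prioritise fluency, no extra friction.
        return view

    # Medium and high risk: always show the tag and the sources.
    view["label"] = confidence_label(answer)
    view["sources"] = answer.sources
    if not answer.sources:
        view["note"] = "No reliable sources found."

    # High risk: a human checks the answer before anyone acts on it.
    if risk is Risk.HIGH:
        view["needs_human_review"] = True
    return view
```

For example, `present(Answer("Take 500 mg twice a day.", 0.4, []), Risk.HIGH)` would return a view tagged “uncertain”, with the “No reliable sources found.” note and `needs_human_review` set to `True`. The same answer in a low-risk context would be shown as plain text. The point of the sketch is only that the presentation changes with the stakes, not that these particular thresholds are the right ones.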

What You Can Do Today

Even if you’re not designing the interface, you can still reduce the risk of hallucinations or spot them more easily. Here are a few ways to do that:

- Ask for sources or explanations – Whenever possible, prompt the LLM to explain its reasoning or provide references. If it can’t give a clear source or explanation, treat the answer cautiously.

- Look for uncertainty cues – LLMs rarely flag when they’re guessing. However, if you see words like “likely”, “unclear”, or “I’m not sure”, take them as a hint, since the answer could be wrong.

- Double-check critical information – For anything important, such as medical, financial, or legal advice, always verify the answer independently.

- Use multiple queries – If you’re unsure whether an answer is correct, ask the same question in different ways or use another LLM if you can. Inconsistencies can reveal hallucinations.

- Treat the LLM as a collaborator, not an authority – Use prompts to get suggestions instead of definitive answers, and let your judgment have the final say.
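If you collect answers programmatically, the “use multiple queries” tip above can be roughed out in a few lines. This is a simple sketch that only suits short factual answers, since it compares answers by exact match after basic normalisation. The `normalize` and `agreement` helpers are hypothetical names, and gathering the answers from one or more LLMs is left out.

```python
import re
from collections import Counter


def normalize(answer: str) -> str:
    """Lowercase and drop punctuation so trivial differences do not count."""
    return re.sub(r"[^a-z0-9 ]+", "", answer.lower()).strip()


def agreement(answers: list[str]) -> float:
    """Share of answers that match the most common normalised answer."""
    if not answers:
        raise ValueError("need at least one answer")
    counts = Counter(normalize(a) for a in answers)
    return counts.most_common(1)[0][1] / len(answers)


# Three answers to the same question, asked in different ways.
answers = ["1969", "1969.", "1968"]
if agreement(answers) < 1.0:
    print("Answers disagree; verify independently before relying on them.")
```

Agreement doesn’t prove an answer is correct, since a model can repeat the same mistake consistently. Disagreement, however, is a cheap signal that at least one answer deserves a closer look.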

As discussed in this blog post, if your LLM replies with absolute confidence while quietly guessing half the time, the problem isn’t the model. The problem is that the interface is “lying” on its behalf. Because of this, hallucinations aren’t just mistakes or a bug we can fully eliminate. They’re a limitation we have to design around.

The teams that accept this reality, instead of chasing perfection, are the ones building systems people can actually trust. And when you think about it, trust is far more valuable than pretending an LLM is always right.

Extra Sources and Further Reading

- Large Language Models Hallucination: A Comprehensive Survey – Cornell University

https://arxiv.org/abs/2510.06265

This paper provides a detailed academic overview of the causes, types, detection, and mitigation strategies for hallucinations in LLMs.

- What Are AI Hallucinations? – IBM Think

https://www.ibm.com/think/topics/ai-hallucinations

An authoritative explanatory guide from IBM on what hallucinations are in the context of AI/LLMs, why they occur, and why they persist.

- AI in Law: The Real Risks Beyond Hallucinated Cases – Cornell Law School

https://publications.lawschool.cornell.edu/jlpp/2025/04/09/ai-in-law-the-real-risks-beyond-hallucinated-cases/

This article explores LLM hallucination risks in high‑stakes domains (like legal research), detailing why hallucinations matter when AI is used in professional settings.

- Hallucination‑Free? Assessing the Reliability of Leading AI Legal Research Tools – Stanford Law

https://dho.stanford.edu/wp-content/uploads/Legal_RAG_Hallucinations.pdf

This paper examines how RAG‑based AI tools perform in real research tasks, including how often they hallucinate even when grounded in real data.