Key Takeaways

- Some risks are not obvious and build up quietly over time, often without triggering alarms. AI may pose a similar slow-burn threat.

- The biggest risk from AI may not be a sudden catastrophe. It could be the steady outsourcing of decision-making and mental effort, which quietly changes how we think.

- Unlike industrial hazards, there are currently no agreed-upon tools or metrics to measure cognitive exposure to AI. This leaves society in a pre-instrument phase, largely unaware of what’s happening.

- We must start observing and measuring AI’s long-term effects now, ask hard questions early, and create safeguards so that we guide AI’s integration rather than letting it reshape our minds (and futures) without oversight.

We fear car accidents. We fear plane crashes. We fear earthquakes. We fear potential nuclear disasters. These are the kinds of risks that dominate our imagination mainly because they can happen at any moment, which makes them feel constantly present. But what many people fail to realise is that not all risk looks like that. Sometimes, risk accumulates quietly, almost invisibly, often over long periods of time, building up in ways that never trigger an alarm until it’s far too late.

If you’re wondering what all this has to do with AI, the connection becomes clearer once we see that AI risk looks less like a car accident or plane crash and more like something far subtler. Most fears about AI currently fall into two familiar camps: either it will take most jobs or, in the most dramatic framing, it will threaten civilisation itself. But neither of those outcomes is what matters most. What matters most is how the risk unfolds. To better understand how this type of risk develops, here are a few illustrative examples:

- Asbestos Exposure – For decades, workers handled asbestos every single day. They cut it, drilled into it, and mixed asbestos-containing cement and insulation compounds on site, often without any protective gear, because nothing obviously bad was happening at the time. The damage, however, was unfolding in slow motion. Fibres lodged deep inside workers’ lungs, inflammation built up quietly, scar tissue formed gradually, and breathing became harder by degrees rather than overnight. It was only years, often decades, later that diseases like asbestosis and mesothelioma became clearly linked to that daily exposure, and by then, the harm had long been done.

- Hand-Arm Vibration Syndrome – Jackhammers and grinders seemed like miracle tools when they first appeared on construction sites. Because they made work faster and far more efficient, it’s no surprise they spread quickly. But over time, many workers who used them day after day developed Hand-Arm Vibration Syndrome, often called vibration white finger.

- Lead Exposure – Lead seemed like the perfect material for pipes. At the time it was widely used, few people understood that long-term exposure, even at low levels, could damage health, particularly in children. Decades later, the consequences became painfully clear.

And we can extend the list even further to industrial noise exposure, smoking, even seemingly safe office work. In all of these cases, the conclusion is the same: something doesn’t need to be harmful in a single exposure to be dangerous. Harm can build up slowly, quietly, invisibly, and when it does, the cumulative effect over time can be devastating, forcing society to rethink something that once felt unquestionably beneficial.

Across these examples, one variable dramatically changed the outcome: duration of exposure. Expressed as a simple equation, the idea might look like this:

Risk = Severity × Likelihood × Duration.

Without duration, risk looks manageable, even acceptable. But once you factor in sustained exposure over years, the equation changes completely. What may seem small becomes systemic. What seems tolerable becomes dangerous.
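To make the role of duration concrete, here is a toy Python sketch of the simplified equation above. The scores are hypothetical and sit on arbitrary scales (severity and likelihood from 1 to 5, duration in years of exposure); they are not drawn from any real risk assessment and only illustrate how the multiplication behaves.

```python
# Toy illustration of the simplified model: Risk = Severity x Likelihood x Duration.
# All scores are hypothetical: severity and likelihood on an arbitrary 1-5 scale,
# duration in years of exposure. This is not a validated risk-assessment method.

def risk_score(severity: int, likelihood: int, duration_years: int) -> int:
    """Multiply the three factors, as in the simplified equation."""
    return severity * likelihood * duration_years

# A severe but brief exposure: high severity, low likelihood, one year.
acute = risk_score(severity=5, likelihood=1, duration_years=1)

# A mild but sustained exposure: low severity, modest likelihood, twenty years.
chronic = risk_score(severity=1, likelihood=2, duration_years=20)

print(f"acute:   {acute}")    # 5
print(f"chronic: {chronic}")  # 40
```

In this toy model, the mild but sustained exposure ends up with eight times the score of the severe but brief one. Drop the duration term and the chronic case looks trivially small, which is exactly the blind spot described above. Real occupational risk assessments are considerably more involved than a single product, so treat this purely as an illustration.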

The Hidden Risk of AI

When it comes to AI risk, here’s an uncomfortable question that rarely gets asked: what if the bigger risk isn’t a single catastrophic event that may or may not happen, but something much slower and quieter, something that accumulates over time, much like the long-term effects of exposure to asbestos, vibrating tools, lead, industrial noise, and tobacco smoke?

What if, in the case of AI, the risk isn’t about one bad answer, one misuse, or one dramatic failure, but about constant reliance on instant synthesis: the hundreds of answers we accept without checking because it’s faster, the dozens of tiny decisions we offload because we trust its judgement more than our own, and, last but not least, the countless small moments where we choose convenience over mental effort? Individually, each of these may feel trivial. But collectively, they form a pattern worth paying attention to.

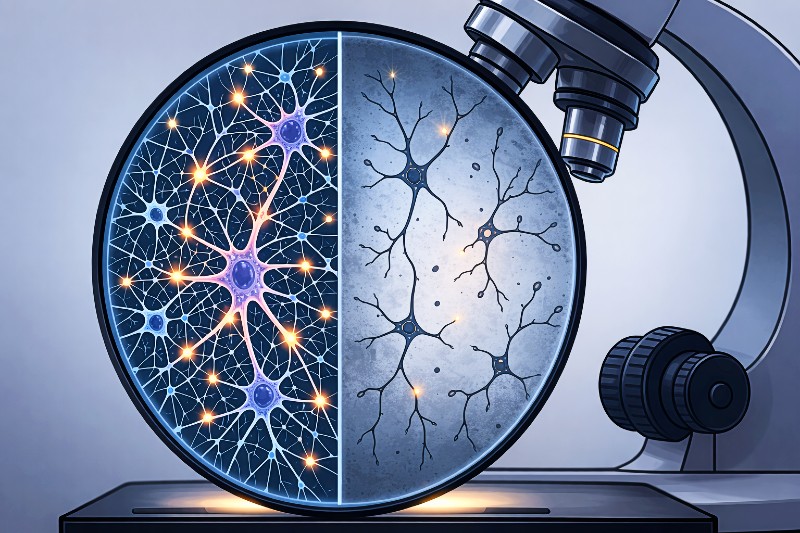

Where does it all lead? Well, some researchers and cognitive scientists have already been raising concerns about cognitive atrophy for a while now. Simply put, consistently outsourcing mental effort could gradually reshape how our brains actually work. If we zoom out historically, we’ve seen similar debates before. When the internet and smartphones emerged, experts warned that search engines might weaken our ability to retain information and that smartphones could erode our attention span. At the time, those concerns sounded alarmist to many. And yet, in hindsight, they weren’t entirely wrong.

Seen through the same lens, the idea that outsourcing thinking to AI could reshape our cognitive habits no longer feels radical. It feels plausible.

But what makes this especially unsettling is that we currently have no reliable way to measure it. There are no widely accepted metrics. No instruments. No clear baselines. It’s just us, slowly handing over more and more mental bandwidth while assuming everything is fine because nothing visibly breaks.

When Harm Has No Metrics

With asbestos, we eventually tracked disease rates. With industrial noise, hearing tests were developed and exposure thresholds were set. With vibration tools, we introduced exposure limits and monitoring protocols. Each slow-harm industry eventually built the instruments needed to detect what had previously been undetectable.

But with AI, what’s the equivalent? A cognitive audiogram? A longitudinal reasoning index? A population-level attention metric? A professional deskilling threshold?

The uncomfortable truth is that we simply don’t know. We have no agreed definitions for cognitive exposure, no shared understanding of what safe reliance levels might look like, no long-term degradation signals, and no early warning indicators. And yet, at the same time, we’re rolling out AI at population scale, embedding it into work, education, healthcare, and everyday personal decisions, without having even defined what cumulative harm would look like.

And no, that’s not a criticism of any individual company or regulator. It’s just a structural observation. An important one, in my opinion. Because you can’t regulate what you can’t measure. You can’t insure what you can’t quantify. And you can’t govern what you can’t detect.

But here’s the real issue: unlike every other industry that eventually faced slow-harm realities, we haven’t even agreed on which risk indicators we should be measuring. In many ways, we’re flying blind and somehow calling it innovation.

There’s something else worth considering too. While comparisons with these slow-building hazards highlight an important pattern, they can also be oddly reassuring, because they imply that if something goes wrong, we’ll eventually identify the problem and respond to it.

But what if AI doesn’t follow that script? What if it becomes a diffuse, chronic phenomenon that evolves so slowly we may not notice it until it’s too late? Even then, we might already be so accustomed to it, even dependent on it, that instead of recognising harm, we may simply choose to adapt to it.

So, rather than fighting back, we might rely on AI even more, verify less, delegate judgement more often, and quietly normalise the outsourcing of cognition, simply because it feels efficient and harmless.

And because cognitive atrophy won’t come with physical discomfort, it might not even feel real. There will be no coughing. No numb fingers. No ringing in the ears. Just subtle shifts in how we think, reason, and decide, shifts that may not even feel like we’re losing anything at all until they’re already well established.

The Measurement Problem

Safety frameworks across industries are largely built around acute, unexpected events. That makes sense, because the experts designing these frameworks tend to focus on the most pressing questions, such as: What are the catastrophic scenarios that could happen? Who will be liable? How do we prevent worst-case outcomes?

Although these are reasonable starting points, long-term exposure problems don’t behave like acute ones. And cognitive exposure to AI is even more unusual because it sits at the intersection of psychology, sociology, and technology.

This kind of risk forces us into questions that are far harder to answer, including: What are the long-term effects of daily AI use? At what threshold does harm begin, and how would that threshold even be established? Would it vary from person to person? Based on what? Intelligence? Creativity? Profession?

Is anyone actually monitoring the potential cumulative impact? And even if they were, what kind of data would count as proof of degradation in the first place?

If we don’t start answering these questions and building measurement systems soon, we risk repeating the same pattern that has played out time and again in slow-harm industries: first comes mass adoption, then delayed recognition, followed by retrospective regulation. And eventually, a future generation asking the same haunting question: “Why didn’t anyone see this coming?”

The uncomfortable possibility here isn’t only that AI will cause a dramatic societal shift. It’s not even that it will quietly reshape how we think or build skills. It’s that it might gradually take over the cognitive heavy lifting entirely.

That would mean AI doing the thinking and reasoning while we simply execute its decisions. Some may see that as progress, but I’m afraid it wouldn’t feel that way. Quite the opposite: it would feel more like societal collapse.

At that point, the real risk may not even be rebellion or obsolescence, but downright substitution, with AI becoming the “human” that thinks while we become the “robots” that follow.

If humans start behaving like robots, we eventually face an uncomfortable implication: we may no longer be needed in the way we assume. After all, no one needs “machines” that require rest, food, medical care, and holidays if more efficient alternatives exist.

Before the Instruments Exist: AI’s Current Stage

Every new industry has what you might call a “pre-instrument” phase, which is a stage where people sense that something is changing, but the evidence is mostly anecdotal, measurement tools don’t exist yet, and institutions hesitate because proof feels incomplete or too fragmented to act on.

That, quite possibly, is exactly where AI sits today: before the metrics, before any rigorous analysis, before any exposure limits.

History shows that the most dangerous technologies aren’t always the ones that crash or explode. Sometimes the real danger is what slips seamlessly into daily life: the tools that integrate so smoothly we stop questioning them, the systems that feel helpful enough to bypass scrutiny. And once something becomes invisible through familiarity, honest evaluation of its long-term effects becomes very difficult.

So perhaps the real question isn’t whether AI could be dangerous in theory. It’s whether we’ll build the tools fast enough to spot subtle shifts in how we think before the changes are only visible in hindsight.

Because history is very clear on one thing: by the time we notice the changes, they may already be deeply ingrained and extremely difficult, if not impossible, to reverse.

That’s why waiting for proof isn’t a good strategy. We need to start observing and measuring more if we want to steer AI’s integration rather than be steered by it.

None of this is an argument against progress. The solution isn’t to halt innovation; it’s to remain vigilant, ask the hard questions early, and create the tools that let us see the invisible. Otherwise, the legacy we leave might be one where we outsourced not just tasks, but the very essence of human thought.

Extra Sources and Further Reading

- From Tools to Threats: A Reflection on the Impact of Artificial-Intelligence Chatbots on Cognitive Health – PMC

https://pmc.ncbi.nlm.nih.gov/articles/PMC11020077/

In this paper, the authors introduce the concept of AI-chatbot-induced cognitive atrophy, suggesting that over-reliance on AI may reduce critical thinking, memory, and problem-solving. They call for further research to understand and measure these long-term cognitive effects.

- AI’s Cognitive Implications: The Decline of Our Thinking Skills? – IE University

https://www.ie.edu/center-for-health-and-well-being/blog/ais-cognitive-implications-the-decline-of-our-thinking-skills/

This article explains that frequent AI use can weaken critical-thinking skills by encouraging cognitive offloading, and it offers strategies to preserve independent reasoning.

- Staying Sharp: Practical Strategies for Preserving Cognitive Skills in the Age of Generative AI – Philanthropy

https://philanthropy.org/staying-sharp-practical-strategies-for-preserving-cognitive-skills-in-the-age-of-generative-ai/

This article offers practical strategies for preserving cognitive skills in the age of generative AI, warning that over-reliance on AI tools can weaken critical thinking and other core abilities.