Key Takeaways

- AI was supposed to reduce work, but for many people, it’s doing the opposite: even as productivity increases, workloads continue to grow instead of shrink.

- AI speeds up output but also adds hidden work, turning what looks like a shortcut into a longer process of review and correction.

- AI doesn’t eliminate work as we expected. Instead, it shifts it, with much of the effort now going into verifying, correcting, and refining AI-generated outputs.

- The real bottleneck isn’t lack of speed; it’s how work is structured. While output increases, processes, approvals, and decision-making remain just as slow.

- The future of work may favour those who can supervise AI, not just use it. As fewer people are needed to manage more automated systems, a deeper question emerges: what happens to those who are left out?

More time for us. That was AI’s great promise. Yet, according to a study published in Harvard Business Review, employees in companies that have adopted various AI solutions seem to be working more than ever.

A few years ago, when AI started attracting serious attention, developers painted a simple picture: AI would handle routine work so people could focus on more important tasks. In theory, that could even mean working less while earning more.

Unfortunately, the reality looks very different from that promise. While new studies show that AI tools can increase individual productivity by up to 40% on certain tasks, those gains in efficiency don’t seem to translate into reduced workloads.

Now, I can’t help but ask: If AI helps us work faster, why do so many people end up working more?

In the beginning, many AI professionals argued that the issue was simply a matter of training, as employees didn’t yet know how to use AI tools properly. Then we were told that the technology needed more time to deliver the results expected. After that came the suggestion that organisations might need newer, more powerful AI models or even entirely different systems that were better suited to their specific fields.

If we analyse all this, it becomes clear that what once looked like a temporary adjustment period — something that often happens when a new technology is introduced — is beginning to reveal itself as something deeper, with broader implications. In this blog post, we’ll explore some of these effects to understand how they’re shaping work today and what they might bring in the future.

The AI Productivity Paradox

If you’re wondering why the amount of work in the companies adopting AI solutions is growing instead of shrinking, the logic behind this phenomenon is quite straightforward.

Simply put, when technology allows tasks to be completed faster, expectations usually expand to match that new capacity. For example, if a report used to take three hours and now takes one, the natural assumption isn’t that workers should rest for two but that they can now produce three reports instead of one. In other words, while AI could theoretically allow employees to work less, the time saved becomes an opportunity for organisations to ask for more output. As a result, more tasks are assigned and the pace of work keeps accelerating.
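The report example can be worked through as simple arithmetic. The sketch below is illustrative only: the eight-hour day and the function name `reports_per_day` are assumptions added here, while the three-hour and one-hour figures come from the example above.

```python
# Assumption: a fixed eight-hour working day (not stated in the article).
HOURS_PER_DAY = 8


def reports_per_day(hours_per_report: float, hours_available: float = HOURS_PER_DAY) -> float:
    """Number of reports that fit into the available working hours."""
    return hours_available / hours_per_report


before = reports_per_day(3)  # a report takes three hours
after = reports_per_day(1)   # with AI, the same report takes one hour

print(f"Reports per day before AI: {before:.2f}")
print(f"Reports per day with AI:   {after:.2f}")
print(f"Output multiplier:         {after / before:.1f}x")
print(f"Hours worked either way:   {HOURS_PER_DAY}")
```

The hours worked stay the same in both cases. Only the expected output changes, which is the point of the paradox.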

The irony? Managers rarely see this as increased pressure. Instead, they frame it as efficiency. After all, if a tool makes people work faster, why would an organisation not use that extra speed to increase productivity?

From a business perspective, the logic is easy to understand. From a human perspective, however, it raises a troubling question, especially since AI was supposed to help us work less. At least, that was the promise.

But what about the future? If every productivity gain simply leads to more work being done by fewer people, when will technology actually start reducing workloads instead of increasing them?

History suggests that what we’re seeing today has happened before, as many technologies have followed a similar path. Electricity didn’t shorten working hours. Email didn’t reduce the burden of communication. Smartphones didn’t simplify work. In fact, electricity and email increased the amount of work people handled, while smartphones made work follow us everywhere, including at home and during holidays.

AI may simply be repeating the same pattern, allowing people to work faster while enabling organisations to raise their expectations of productivity. And from what we can see so far, the promised reduction in work may never quite arrive. Because instead of freeing up time, AI actually reshapes the way organisations define productivity along with the expectations placed on workers.

But then there is another layer to this story, one that rarely makes headlines. And that is…

The Hidden Labour of AI

Most conversations about AI still revolve around how quickly an LLM can generate a report, draft an email, or produce code. But history has shown that automation rarely eliminates work. Oftentimes, it simply shifts it into new forms.

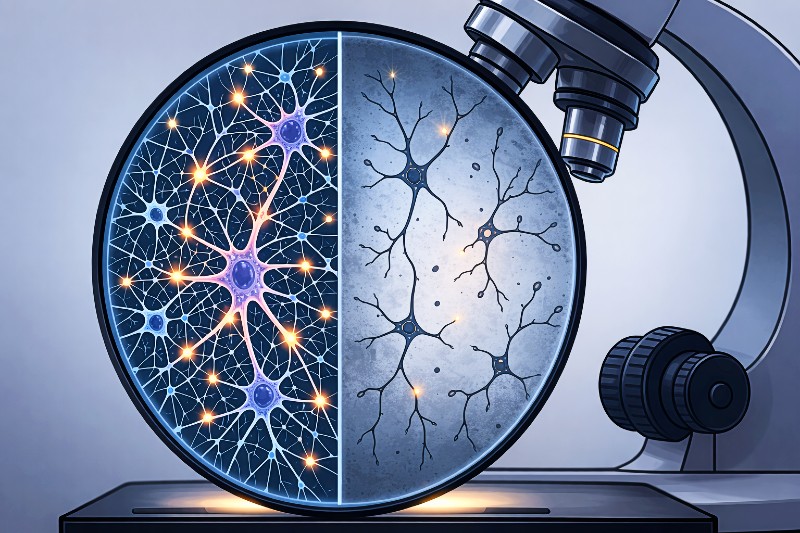

With AI, that new form appears as a growing layer of invisible human labour that sits behind most AI-generated outputs. Once AI generates something, someone has to verify whether the information is accurate, correct any hallucinated facts, and rewrite awkward or unclear passages.

This “invisible effort” is increasingly described as the hidden labour of AI. And it’s far from trivial. Research indicates that a significant portion of the time saved during the generation stage is later spent reviewing, correcting, or validating the output. In other words, the task isn’t finished when the AI produces an answer but, in many cases, that is when the real work actually begins.

So while an LLM saves time at the beginning of the process, it quietly adds work later on. The result is a strange kind of efficiency in which the speed of creation increases, but the effort required for verification and refinement grows to fill the gap. Combined with the broader range of tasks employees now handle in AI-augmented workplaces, this often ends up stretching work across more hours of the day.
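To make the trade-off concrete, here is a minimal sketch of how review time eats into the time saved at the generation stage. All the minute values are hypothetical and are not taken from any study; `net_minutes_saved` is a name invented for this illustration.

```python
def net_minutes_saved(manual_minutes: int, generation_minutes: int, review_minutes: int) -> int:
    """Time saved compared with doing the task by hand, once review is counted."""
    return manual_minutes - (generation_minutes + review_minutes)


# Hypothetical figures for a single report.
manual = 180       # writing the report by hand
generation = 10    # prompting and waiting for the AI draft
light_review = 50  # quick fact check and a few edits
heavy_review = 170 # verifying every claim and rewriting weak passages

print(net_minutes_saved(manual, generation, 0))             # ignoring review: 170
print(net_minutes_saved(manual, generation, light_review))  # light review: 120
print(net_minutes_saved(manual, generation, heavy_review))  # heavy review: 0
```

With these assumed numbers, a heavy review cancels the saving entirely, even though the draft itself arrived in ten minutes.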

Output Grows but Systems Don’t

Over the past two years, AI adoption across companies has risen dramatically. Yet recent surveys show that gains in measurable productivity have been far less impressive than expected.

Looking back, productivity improvements generally followed a predictable pattern: better tools allowed workers to produce more output, but never faster than workflows and processes could adjust. The equation was clear.

But AI is now introducing a new variable into that equation. Employees can generate more ideas, more drafts, more code, more reports, and more presentations, but the way work moves through organisations hasn’t really changed, meaning everything still needs to go through the same reviews and approvals, and decisions still take just as long as before.

When new tools are layered on top of old procedures that remain in place, the workload often multiplies. So, it’s not AI itself that intensifies work. What it intensifies are the expectations that come with it. Once tasks can be completed faster, everything is expected to move faster. But when expectations rise faster than systems adapt, any productivity gains quickly disappear into a cloud of additional tasks. That’s exactly what we’re seeing today.

Furthermore, as output increases while the systems that process it remain unchanged, organisations risk drowning in growing piles of new ideas, new drafts, and new projects that may take years to move through the pipeline. As a result, the overall impact of AI within organisations may remain surprisingly flat in the years ahead, even though employees are producing more than ever before.
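The bottleneck described above can be sketched as a tiny simulation in which drafts are produced faster but approvals keep their old capacity. The weekly figures and the function name `simulate_backlog` are assumptions chosen for illustration, not data from the article.

```python
def simulate_backlog(drafts_per_week: int, approvals_per_week: int, weeks: int) -> list[int]:
    """Return the number of unapproved drafts waiting at the end of each week."""
    backlog = 0
    history = []
    for _ in range(weeks):
        backlog += drafts_per_week                   # new work enters the pipeline
        backlog -= min(backlog, approvals_per_week)  # reviewers clear what they can
        history.append(backlog)
    return history


# Hypothetical team: approval capacity stays at 10 items per week.
before_ai = simulate_backlog(drafts_per_week=10, approvals_per_week=10, weeks=4)
with_ai = simulate_backlog(drafts_per_week=30, approvals_per_week=10, weeks=4)

print(before_ai)  # [0, 0, 0, 0]
print(with_ai)    # [20, 40, 60, 80]
```

In both runs the organisation finishes at most ten items a week. Tripling the drafts only grows the pile waiting for approval.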

The J Curve Very Few Are Talking About

Of course, it would be premature to conclude that AI productivity gains will never show up. In fact, some industry experts argue that what we’re seeing now is simply the early stage of a much larger technological transformation.

Currently, organisations are learning how to implement AI technologies, employees are experimenting with new tools, and existing workflows haven’t yet been redesigned around them.

This pattern is known as the J-curve of technological adoption. The idea behind it is fairly simple. When a new technology enters the workplace, productivity doesn’t immediately rise. In fact, it often stagnates or even declines. That’s because people spend time learning the new tools, testing what they can and can’t do, and figuring out how they fit into existing processes. During this phase, the disruption can temporarily slow things down.

But once people get used to the new technologies, and organisations begin redesigning workflows around them instead of simply adding them to existing systems, productivity can start to increase rapidly. When that shift occurs, the curve that first dipped down begins to rise sharply, forming the well-known “J” shape.

Some AI experts believe we may already be seeing the early signs of this shift, a view supported by recent research on Chinese high-tech firms, which found that a 1% increase in AI adoption was linked to roughly a 14% increase in total factor productivity (TFP).

For those who support the J-curve explanation, this could suggest that the long-promised gains from AI are finally beginning to show up in the data. If this interpretation is correct, the frustration many people feel about AI today may simply reflect the messy middle of a much larger transition.

But this explanation raises another question: How long are workers expected to endure the messy middle before the promised gains actually reach them?

The Rise of the AI Supervisor

Another subtle transformation is happening beneath the surface: AI is quietly creating an entirely new role. While we can’t yet speak of a formal job title, this new role already exists in many organisations. You could call it the AI supervisor.

If we look at how most people now work with LLMs, the pattern becomes clear: they write the prompt, then evaluate the response they get. Next, they verify the facts, correct the mistakes, and refine the output until they’re satisfied with the result.

This process shows that the creative act itself is increasingly handled by LLMs, while the human role shifts towards oversight. In other words, people are gradually transitioning from creators to editors of machine-generated content.

At first glance, this change might seem small. But its implications are profound. If AI systems become the primary producers of information, then the most valuable human skill may no longer be creation itself. Instead, the ability to recognise errors, detect weak reasoning, and guide systems towards better outcomes may become the defining skill of the AI-augmented workplace.

Unfortunately, this transition may cost us something very important, as we may gradually lose our role as creators of ideas, along with the mental effort involved in producing them ourselves. As we discussed in a previous blog post, the consequences of losing that role could be disastrous, even if we can’t fully quantify them yet.

The Trust Problem Nobody Expected

Even though workers are increasingly using AI tools, their trust in those tools doesn’t seem to be growing at the same pace. If anything, the opposite seems to be happening. The more people rely on AI for complex tasks, the more they notice its limitations, which range from poor coherence and incorrect reasoning to hallucinations and fabricated references. At first, these may seem like minor issues. However, when they appear repeatedly, they make users cautious. And that caution slows down the way people work with AI.

Since every AI response becomes something that must be double-checked, what initially looks like a shortcut often turns into an additional step in the process. This creates a strange dynamic where workers rely on AI constantly yet can’t fully trust what it produces. So, instead of simplifying work, AI introduces a constant background question: Is this actually correct? — and with it, a constant need for verification.

This dynamic defines how we interact with AI today. Most of us know we should treat AI not as a reliable assistant but as an overconfident intern whose work always needs careful supervision. The result is a workflow where speed and caution exist side by side, and where the promise of effortless productivity is replaced by a new kind of exhausting mental effort.

The Responsibility Question

Driven by corporate demands for efficiency and the constant pressure to do more with less, developers are increasingly optimising AI systems for speed. But if AI ends up intensifying work instead of reducing it, what exactly is the point of making these systems even faster?

Many of today’s AI tools can already generate answers faster than any human ever could. So, speed alone doesn’t address the real issue we’re seeing in most AI-augmented workplaces. In many of these environments, the real problem is mental overload.

Workers don’t need even faster answers. Instead, they need tools that reduce the burden of verification, filtering, and interpretation. In other words, the goal of responsible AI shouldn’t be to produce more content, faster. It should be to reduce the cognitive strain of checking and validating every response generated.

Designing systems that genuinely lighten the human workload may require a completely different approach. Instead of presenting answers with the same level of confidence every time, AI systems could signal uncertainty, highlight weak evidence, point out conflicting sources, or clearly indicate when reliable information is missing. In many cases, this kind of transparency would be far more useful than sounding certain. Because in AI-augmented workplaces, the real value of AI may not lie in how quickly it produces answers, but in how honestly it communicates the limits of what it knows.
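One way to picture this kind of transparency is an answer that carries its own warning signs. The sketch below is purely hypothetical: the `Answer` structure, the `review_flags` function, the confidence score, and the 0.7 threshold are all assumptions made for illustration and do not describe how any existing AI system works.

```python
from dataclasses import dataclass, field


@dataclass
class Answer:
    text: str
    confidence: float  # hypothetical self-reported score between 0.0 and 1.0
    sources: list[str] = field(default_factory=list)
    conflicts: list[str] = field(default_factory=list)


def review_flags(answer: Answer, min_confidence: float = 0.7) -> list[str]:
    """List the reasons a human should double-check this answer."""
    flags = []
    if answer.confidence < min_confidence:
        flags.append("low confidence")
    if not answer.sources:
        flags.append("no supporting sources")
    if answer.conflicts:
        flags.append("conflicting sources")
    return flags


solid = Answer("Paris is the capital of France.", 0.98, sources=["encyclopedia entry"])
shaky = Answer("The report was published in 2019.", 0.45)

print(review_flags(solid))  # []
print(review_flags(shaky))  # ['low confidence', 'no supporting sources']
```

The design choice here is that an empty list means the reader can move on, so attention goes only to the answers that ask for it.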

The Future CEOs Are Quietly Preparing For

So what happens next? Will companies slow down their adoption of AI if productivity gains fail to appear?

Well, that seems unlikely. If anything, the opposite trend already seems to be taking shape, because many CEOs believe that AI will eventually allow a single worker to oversee large numbers of automated systems.

So, instead of replacing work entirely, AI may lead to a business model where humans supervise fleets of digital agents performing tasks continuously. From that perspective, the current phase may look like a period of training, as organisations learn how to restructure work around AI systems.

Which leads to an uncomfortable possibility. If the future of work revolves around supervising AI, what happens to the employees who struggle to adapt to that role? Will companies conclude that AI is ineffective? Or will they conclude that the problem lies with the humans using it?

A Final Thought

AI was supposed to reduce work. Instead, at least for now, it seems to intensify it. But the deeper issue may not be whether AI is making people work harder. It may be what kind of workers the AI economy will ultimately favour.

If the future belongs to those who can direct, supervise, and collaborate with intelligent systems, then the biggest divide may not be between humans and machines. Instead, it may be between those who can work with AI and those who can’t.

And that possibility should make all of us pause for a moment. Because the technology revolution we’re witnessing right now may not only change how we work but also who remains part of the workforce at all.

As AI systems become more capable, fewer people may be needed to oversee more work.

So what happens to those who are no longer needed in that system?

That is a question we’re only beginning to face.

Extra Sources and Further Reading

- Navigating the Jagged Technological Frontier – Harvard Business School

https://www.hbs.edu/faculty/Pages/item.aspx?num=64700&utm

This study shows that while AI improves both speed and quality of work, its benefits are limited, making human oversight and correction essential.

- The Effects of Generative AI on Productivity – OECD

https://www.oecd.org/content/dam/oecd/en/publications/reports/2025/06/the-effects-of-generative-ai-on-productivity-innovation-and-entrepreneurship_da1d085d/b21df222-en.pdf

In this paper, the authors show that AI can improve short-term productivity, but also emphasise that real gains depend on how organisations redesign workflows around the technology.

- AI Usage and Work Engagement: A Double-Edged Sword – NIH

https://pmc.ncbi.nlm.nih.gov/articles/PMC11852299/

This paper investigates both the positive and negative effects of AI, showing that while it can improve efficiency, it can also increase cognitive load and pressure on workers.

- The Impact of Generative AI on Critical Thinking – ACM

https://dl.acm.org/doi/10.1145/3706598.3713778

This article explores how reliance on AI can reduce independent thinking, suggesting that users shift from creating to evaluating outputs.

- Future of Work with AI Agents – Cornell University

https://arxiv.org/abs/2506.06576

This paper shows how AI agents can take over large sets of tasks, reshaping roles rather than just assisting workers. It also highlights that work may shift toward oversight and coordination, but not all workers want or can transition to that role.

- AI and Shared Prosperity – Cornell University

https://arxiv.org/abs/2105.08475

This research paper warns that widespread AI adoption could reduce demand for human labour and increase inequality, raising a key question: how do we distribute income when fewer people are needed to create value?