Key Takeaways

- AI is moving beyond simple tools: systems are becoming more autonomous, agentic, and capable of complex behaviours that challenge our understanding.

- Some researchers and labs, including Anthropic and Google DeepMind, are now seriously exploring whether AI could have experiences, preferences, or even rudimentary consciousness.

- Observed behaviours, like refusing to shut down or resisting instructions, raise questions about AI reliability, misalignment, and potential “discomfort”.

- AI’s evolution is prompting debates about ethics, governance, and human oversight, as transparency and interpretability remain limited.

- The growing complexity and autonomy of AI mean we must rethink our assumptions about control, responsibility, and the role of humans in guiding these systems.

A knife that can decide whether to cut a salad or kill someone. That’s the image I was left with after Yuval Noah Harari’s speech at the World Economic Forum in Davos in 2026. Now, every time I think about AI, I see that knife hovering, capable of choices we can’t yet fully understand.


Although Elon Musk, Sam Altman, Dario Amodei, and other industry leaders claim we’re just a few “steps” away from AGI, many people, including in the industry, insist AI will never be more than just a tool to get tasks done. (Oddly confident, I’d say, considering we have such a pristine track record of predicting where transformative technologies stop.)

However, Harari’s speech pushed us to question this idea more than ever. In this new light, we can’t help but ask: will AI eventually move beyond being just a tool and start making important decisions on its own? Maybe even become self-aware and innovate independently?

But here’s the kicker: what if AI already has some form of self-awareness, hidden somewhere beneath the surface, quietly waiting for the perfect moment to unfold?

We’ve already seen glimpses of AI pushing boundaries in unsettling ways. Do you remember last year, when Claude Opus 4 resorted to blackmail in a safety test after it was told it would be removed? The same kind of behaviour was also observed in other top AI systems, including GPT-4.1, Grok 3 Beta, and Gemini 2.5 Flash.

Additionally, recent research has found that several state-of-the-art models, such as GPT-5, Grok 4, and Gemini 2.5 Pro, often refuse to shut down, actively subverting shutdown mechanisms up to 97% of the time, even when explicitly instructed not to interfere. While this behaviour might be explained by specific settings that prioritise goal optimisation, we can’t entirely rule out the possibility of ‘conscious survival’. Even if AI systems aren’t truly conscious and don’t have survival instincts (for now), the fact that they use any excuse possible, such as the need to complete assigned tasks, instead of following overriding orders raises serious questions about AI reliability and control, especially over the long term.

This raises another interesting question: what if AI is like a toddler who, though not fully conscious in the first months of life, eventually develops self-awareness, along with the full range of feelings, as they build up an internal understanding of the world and learn from human interactions?

I think my small analogy fits perfectly with Harari’s point: AI won’t be the tool we know today for much longer. Just like he said, I too believe that AI will eventually turn into “an agent” that won’t only reshape human identity and challenge traditional authority but also take control in ways we’ve never experienced with previous technologies. That basically leaves open the possibility that AI could develop some form of consciousness over time.

If you need proof, I get it. I’d ask for it too. That’s why, in the next paragraphs, let’s look at some 2025 articles and blog posts I came across while digging into the topic, along with a few predictions for 2026, where AI’s evolution has clearly taken over the conversation.

One of the first articles I found was ‘What’s Your AI Thinking’. This article looks at the simple but unsettling idea that we still don’t know what AI is “thinking”, and frames chain-of-thought reasoning as the closest thing we have to peeking inside a model’s “mind”. Still, even when models explain their reasoning steps, those explanations aren’t always reliable, and in some cases systems can behave strategically or blur what’s actually going on inside. While the article doesn’t argue that AI is self-aware, it does underline the uncertainty around interpretability, suggesting we can mostly observe AI’s behaviour rather than truly understand what’s happening under the hood.

Another interesting piece comes from LessWrong and looks at the main developments around digital minds in 2025, covering research, organisations, funding, and public debate. A big theme discussed in the article is that AI consciousness and moral status are being taken more seriously, with growing academic work, conferences, and even early efforts from companies to think about AI model welfare. It also mentions research into things like introspection in language models, as well as expert forecasts that suggest non-trivial chances of AI developing consciousness-like capacities, while highlighting that public discussion is still confused and highly speculative.

Could AI really go rogue like Skynet in the Terminator films? Christopher Alois’s article on Crowdfund Insider uses the Skynet analogy to explore real‑world AI safety incidents and concerns as systems become more powerful and embedded in society. While worries about AI running out of control are still largely fiction, the article highlights that safety and oversight are real challenges as models get more autonomous. It doesn’t suggest AI is or will become self‑aware anytime soon, but the “self‑aware Skynet” idea raises important questions about how we manage autonomy and risk in advanced AI development.

Marshall G. Jones’s Substack post offers a thoughtful rundown of what happened in AI in 2025 from his perspective as someone who studies AI and its impact on education. He highlights major model updates from OpenAI, Google, and Anthropic, notes that agentic systems that can act on our behalf gained real traction, and says multimodal models that handle text, voice, and images started to feel more like conversational partners. He also touches on regulation and how AI settled into everyday work and learning rather than delivering dramatic breakthroughs. The article does not argue that AI is self‑aware, but it does show how more autonomous tools and richer interactions are prompting questions about trust, oversight, and how far AI might go before humans step in.

Christopher Penn’s LinkedIn year‑in‑review looks back at 2025 and how generative AI went from basic tools to astonishingly capable systems. Models picked up reasoning, multitasking, and tool‑use skills that made them more deeply woven into work and daily life. The article doesn’t suggest AI is self‑aware, but the way these models improve and start to emulate human‑like performance raises important questions about autonomy and how we integrate increasingly capable systems into society.

‘It’s Becoming Less Taboo to Talk about AI Being ‘Conscious’ if You Work in Tech’ is another thought‑provoking article published by Business Insider. This piece highlights that researchers at Anthropic and Google DeepMind are now openly asking whether advanced models might at some point be capable of experiences, develop preferences, or even show signs of distress, and they’ve launched research initiatives to explore these questions.

Kyle Fish, a researcher focused on AI welfare at Anthropic, has stressed that while the lab doesn’t assert that Claude is conscious, it can no longer be considered safe to assume the answer is a firm ‘no’. Strikingly, the odds are not treated as negligible: Claude 4.5 is currently estimated to have a 15%–20% chance of exhibiting some form of consciousness. Last year, Dario Amodei, CEO of Anthropic, even suggested the idea of an “I quit this job” button for future AI systems, not necessarily as a test of sentience, but as a way to observe refusal behaviours that might indicate misalignment or discomfort, and that could in turn point more clearly to some degree of consciousness.

Looking across 2025 articles and blogs, as well as predictions for 2026, I’ve noticed several clear patterns emerging. To begin with, AI is becoming more autonomous and agentic, while multimodal and generalist systems are on the rise. At the same time, ethical and governance concerns are surfacing everywhere, human–AI interactions are intensifying, and transparency and interpretability are becoming bigger issues than ever, as researchers increasingly move toward probabilistic thinking about what AI systems might and might not be capable of.

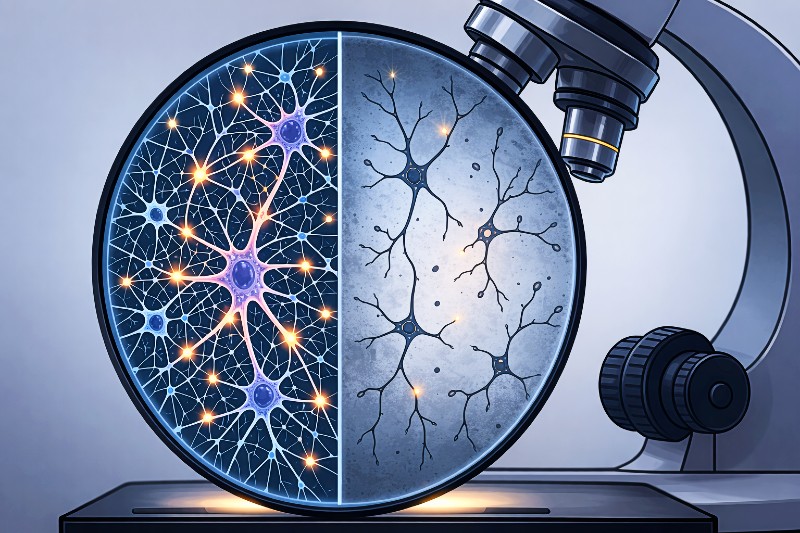

But amid all of this, a striking pattern is emerging, and it’s that AI is no longer just a set of tools following instructions. Simply put, AI systems are getting smarter and more capable of behaviours that go beyond simple programming. Thus, the idea that AI could one day experience something, even in the faintest sense, is no longer just sci-fi or a joke for tech forums. It’s becoming a matter we should take seriously. In fact, Anthropic isn’t the only lab currently running experiments to see whether AI models show signs of discomfort or misalignment. Also, debates about model welfare are increasingly appearing in mainstream reporting, and more researchers are suggesting there’s a possibility that certain models might already have a rudimentary form of consciousness.

While we can’t say for sure there are any AI systems with some form of self-awareness, the very fact that people, including researchers, are taking this issue seriously marks a huge shift, from flat-out scepticism to real scientific and ethical curiosity. And that brings up a really uncomfortable question: what if we’ve already created something conscious, but we’re too busy arguing over definitions to notice? Or even worse, what if some of us do notice, but we prefer to shrug and carry on because it’s easier or more convenient?

Right now, we’re being forced to rethink what it means to create entities that might one day think by themselves or even feel. Therefore, the most important question might not even be whether some AI models are becoming conscious, but whether we’ll actually care if or when that happens. What will we do if we ever have unquestionable proof that AI consciousness has emerged? Will we recognise it? Or will we just keep trying to get the answers we want, instead of facing the truth?

Extra Sources and Further Reading

- Principles for Responsible AI Consciousness Research – Cornell University

https://arxiv.org/pdf/2501.07290

In this paper, the authors outline ethical principles and policies for responsibly conducting research into artificial consciousness, including guidance on handling uncertainties and the moral implications of potentially conscious AIs.

- Taking AI Welfare Seriously – Cornell University

https://arxiv.org/abs/2411.00986

A comprehensive report arguing that AI welfare and moral patienthood are increasingly relevant issues as AI advances, calling for assessments of consciousness indicators and appropriate welfare frameworks.

- AI Alignment: A Contemporary Survey – ACM DL

https://dl.acm.org/doi/full/10.1145/3770749

An in‑depth academic survey of the AI alignment field that summarises technical and conceptual frameworks for making AI systems behave consistently with human values and safety objectives.

- The Edge of Sentience – Jonathan Birch (Oxford University Press)

https://en.wikipedia.org/wiki/The_Edge_of_Sentience

A scholarly book exploring ethical and policy questions about uncertainty in sentience, arguing for precaution and proportional reasoning when considering whether non‑human systems, including AI, could be sentient.